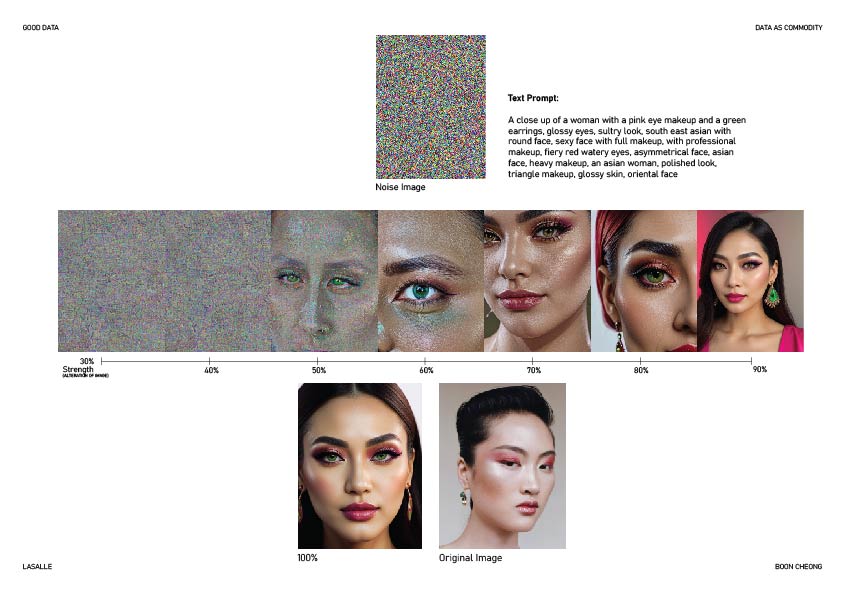

Experiment 1

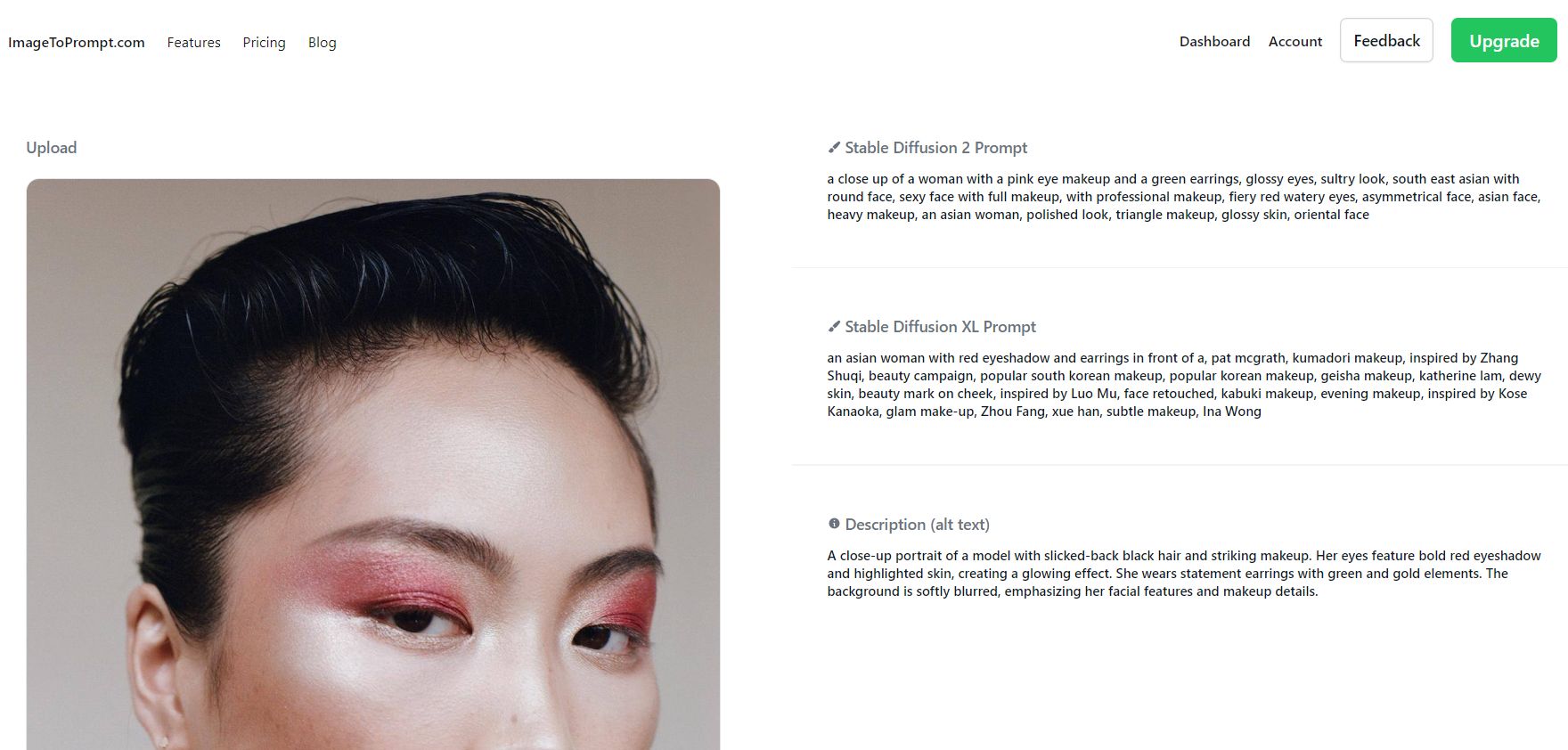

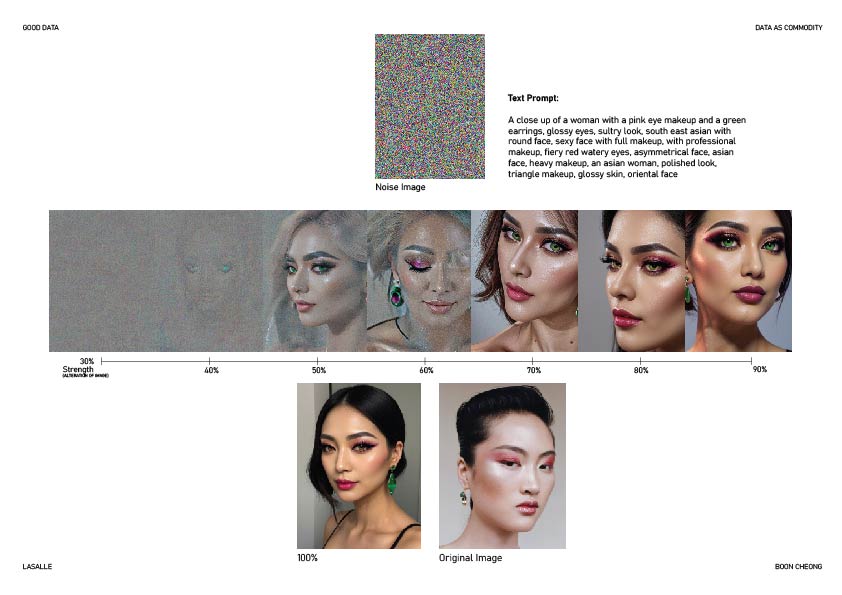

Diffusion models work by starting with a noisy image and progressively removing noise in a process called "denoising," guided by patterns learned during training and a text prompt provided by the user. This experiment investigates how the initial noise state affects the accuracy of a diffusion model's output, with a focus on how the influence of noise compares to the influence of the prompt in shaping the final image. In the first scenario, noise is layered onto an original image, which is then fed into an AI image generator alongside a fixed prompt for reconstruction. In the second scenario, a random noise image is generated and processed with the same prompt. The outputs from both scenarios are then compared to the original image using quantitative similarity metrics. The study aims to uncover whether the initial noise or the prompt semantics plays the more significant role in the generation process, providing insight into the robustness and dependencies of diffusion models.
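The two inputs and the comparison described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the report does not say which noise type or which similarity metrics were used, so Gaussian noise, mean squared error (MSE), and peak signal-to-noise ratio (PSNR) are stand-ins, and the function names and the flat grey test image are hypothetical.

```python
import numpy as np

def add_gaussian_noise(image, sigma, seed=0):
    """Layer zero-mean Gaussian noise onto an 8-bit image (assumed noise type)."""
    rng = np.random.default_rng(seed)
    noisy = image.astype(np.float64) + rng.normal(0.0, sigma, size=image.shape)
    return np.clip(noisy, 0, 255).astype(np.uint8)

def random_noise_image(shape, seed=0):
    """Generate a pure noise image with uniformly random pixel values."""
    rng = np.random.default_rng(seed)
    return rng.integers(0, 256, size=shape, dtype=np.uint8)

def mse(a, b):
    """Mean squared error between two images of the same shape."""
    diff = a.astype(np.float64) - b.astype(np.float64)
    return float(np.mean(diff ** 2))

def psnr(a, b, max_value=255.0):
    """Peak signal-to-noise ratio in dB; higher means more similar."""
    err = mse(a, b)
    if err == 0:
        return float("inf")
    return float(10.0 * np.log10(max_value ** 2 / err))

# Hypothetical stand-in for the original photo: a flat mid-grey RGB image.
original = np.full((64, 64, 3), 128, dtype=np.uint8)
noisy_original = add_gaussian_noise(original, sigma=10.0)
pure_noise = random_noise_image(original.shape)

print("PSNR, noisy original vs original:", psnr(original, noisy_original))
print("PSNR, pure noise vs original:", psnr(original, pure_noise))
```

In the actual experiment, the same metric functions would be applied to the generator's outputs rather than to the noisy inputs, with every output resized to the original's dimensions first.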

Programs used: ChatGPT, DEZGO AI Image Generator

Method

For the first set of images, I start with a real image, apply noise on top of it, and then bring it into the DEZGO AI image generator. DEZGO AI provides a slider that sets the strength, which controls how much the supplied image is changed. For this experiment, the strength starts at 30% and increases by 10% for each output. The starting point is 30% because below that strength the output still appears as a noise image.
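The strength schedule can be written out as a short sketch. The upper bound of 100% and the total of 50 denoising steps are assumptions, since the report states neither. The mapping from strength to the number of denoising steps follows the convention used by some open-source image-to-image pipelines; whether DEZGO AI works the same way internally is not known.

```python
def strength_levels(start=30, stop=100, step=10):
    """Strength settings in percent, from `start` up to and including `stop`.

    `stop=100` is an assumed upper bound; the report only gives the
    starting value (30%) and the increment (10%).
    """
    return list(range(start, stop + 1, step))

def effective_steps(strength_percent, num_steps=50):
    """Denoising steps actually run at a given strength.

    Assumes the convention where strength scales the step count:
    a higher strength adds more noise and runs more denoising steps,
    so less of the supplied image survives.
    """
    return min(num_steps * strength_percent // 100, num_steps)

for s in strength_levels():
    print(f"strength {s}% -> {effective_steps(s)} of 50 denoising steps")
```

Under this convention, a low strength runs only a few denoising steps, which is consistent with outputs below 30% still looking like the noisy input.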

Observation

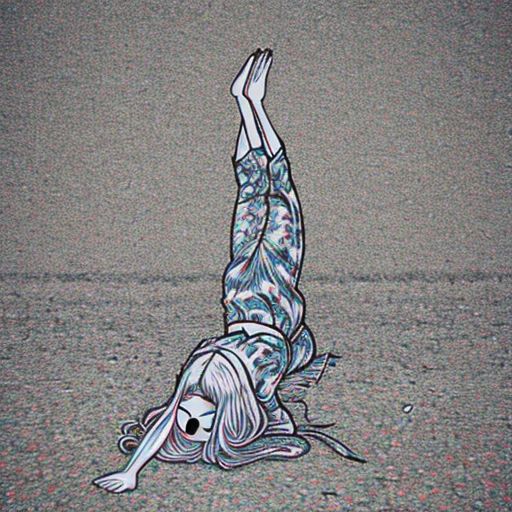

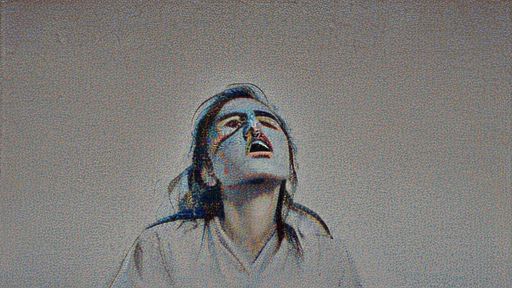

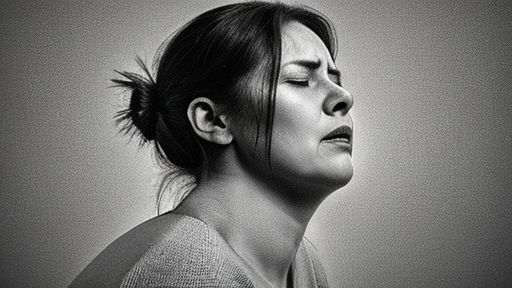

Ultimately, the outputs look different. One possible cause is that the prompts were slightly different. In the images generated from pure noise, the figure starts out rather small at 45% strength, and at 70% it shows the strangest pose, with the head dislocated. The one similarity I can draw between the two images is the framing: neither shows the lower half of the body.